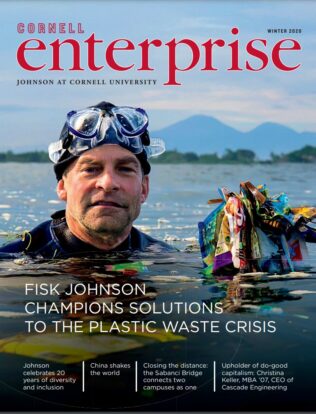

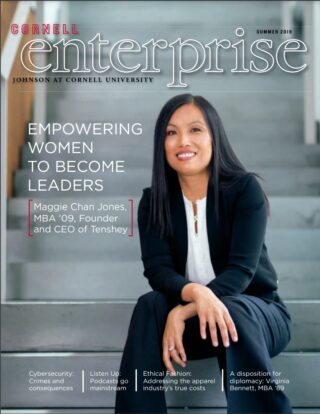

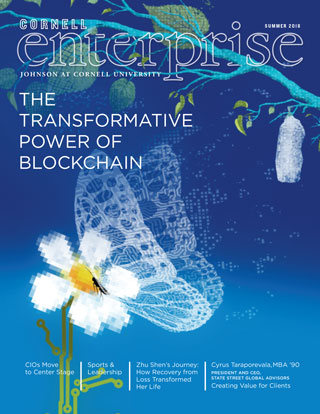

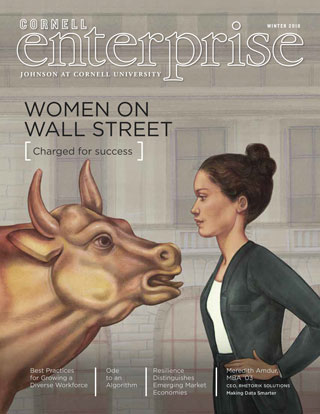

Cornell Enterprise is published twice a year by the Samuel Curtis Johnson Graduate School of Management at Cornell University. In addition to the print edition, articles are published regularly on Cornell SC Johnson’s central hub.

All newly created PDFs on this website are accessible. For an accommodation for these PDFs, please contact the SC Johnson College Accessibility Team.